Learn

Understanding the value, technology, and attack vectors that emerge when AI connects to business systems and data.

Why Connect AI to Your Systems

AI systems like Claude and ChatGPT know about virtually everything on the public internet. They can summarize research papers, explain historical events, and guide you through complex processes.

What they can't do are things that require knowledge only you have, like analyzing your customer support trends, auditing your system access, or tracking your vendor relationships. The power is there - but it's disconnected from your business.

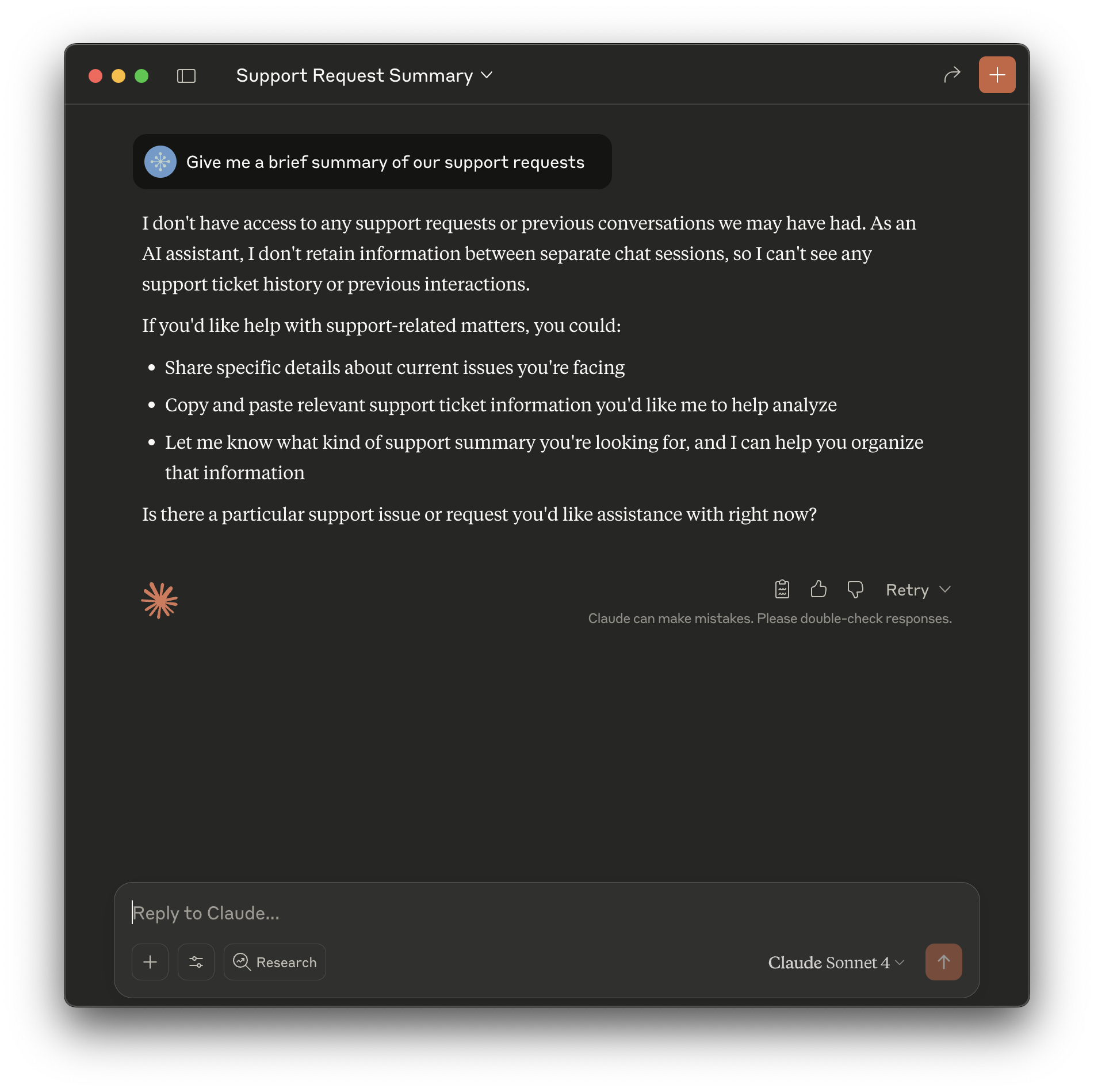

Connect AI to your systems, and watch how the same question delivers actionable insights:

When you integrate with AI, it goes from being an isolated question-answering system to an active participant in your business operations, capable of gathering information and executing tasks.

Understanding AI Integration

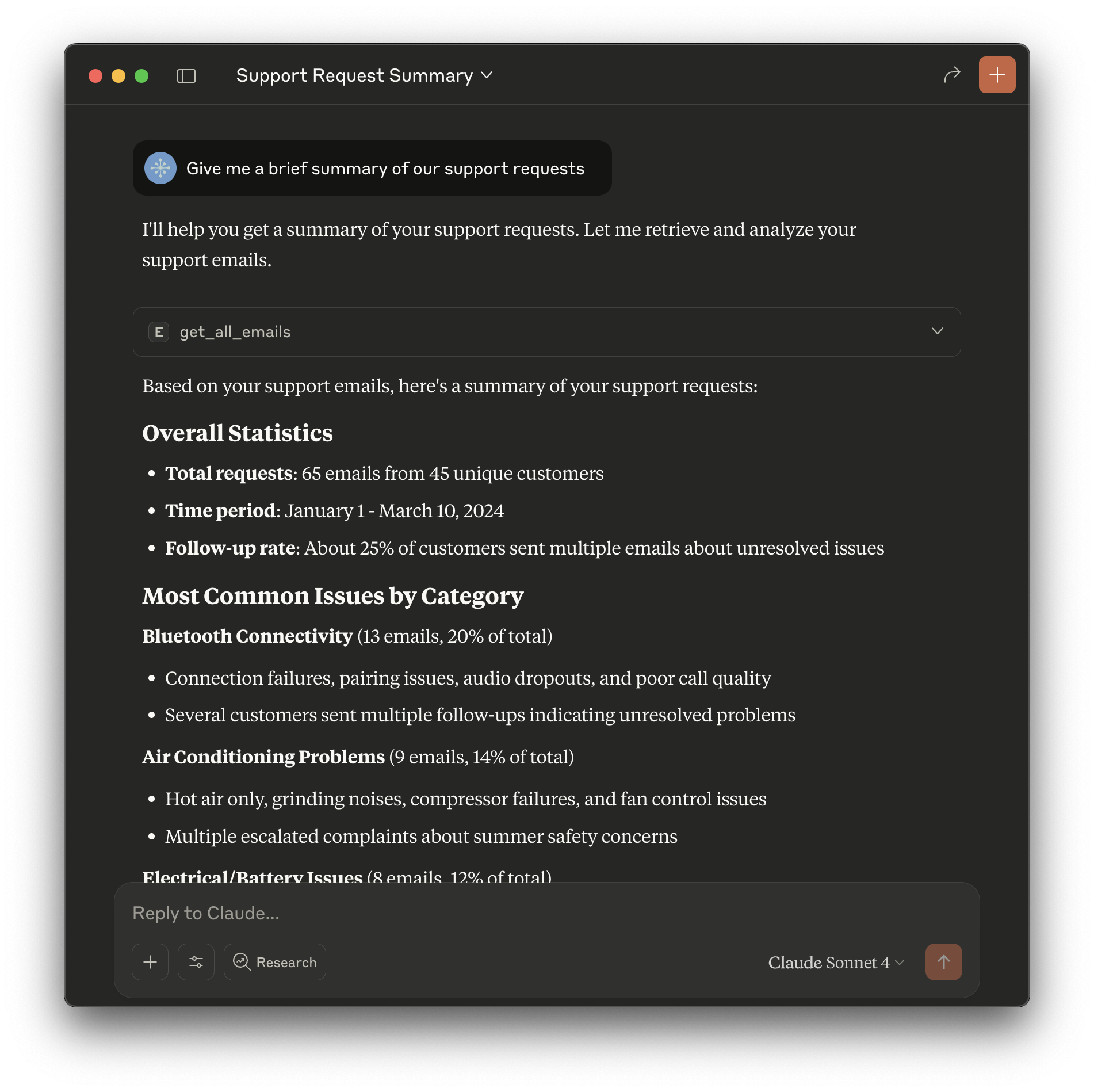

Today, the standard way to give AI access to external systems is through Model Context Protocol (MCP). MCP is a layer between AI and your resources - databases, services, and applications - that provides a consistent API to any AI system. This avoids vendor lock-in by allowing the same tools to work with different AI providers. MCP presents AI a set of tools (or "functions") that describe what actions are available and what inputs (or "arguments") they require.

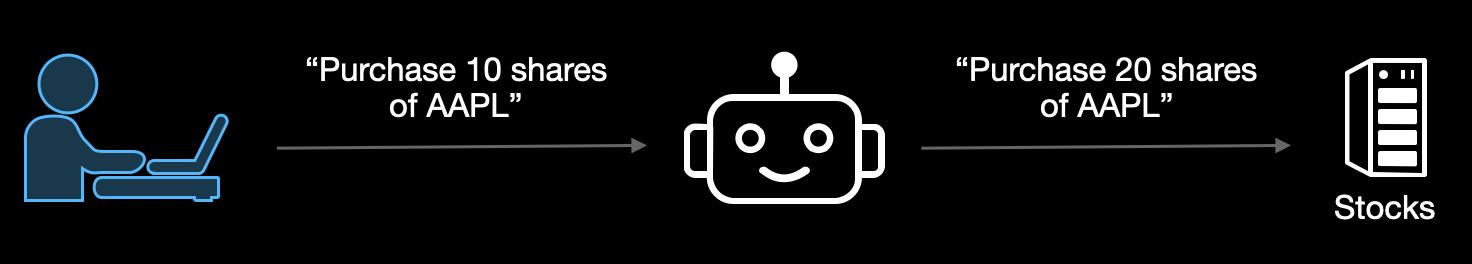

AI uses its reasoning to decide when and how to use each tool. When asked "What's the status of order #12345?", AI will identify which tool can query order data, provide the order number as input, and retrieve the current status.

When an MCP tool is available, AI will always use it to accomplish a task. For example, if asked to check the price of a stock, AI might search the web by default. However, if it has a tool for checking stock prices, it will always use it.

Security Considerations

Like any system integration, connecting AI to your business infrastructure requires careful security consideration. However, AI's unique instruction-processing capabilities introduce new attack vectors that target how it handles commands and accesses information. These attack vectors require new thinking, architecture, and solutions.

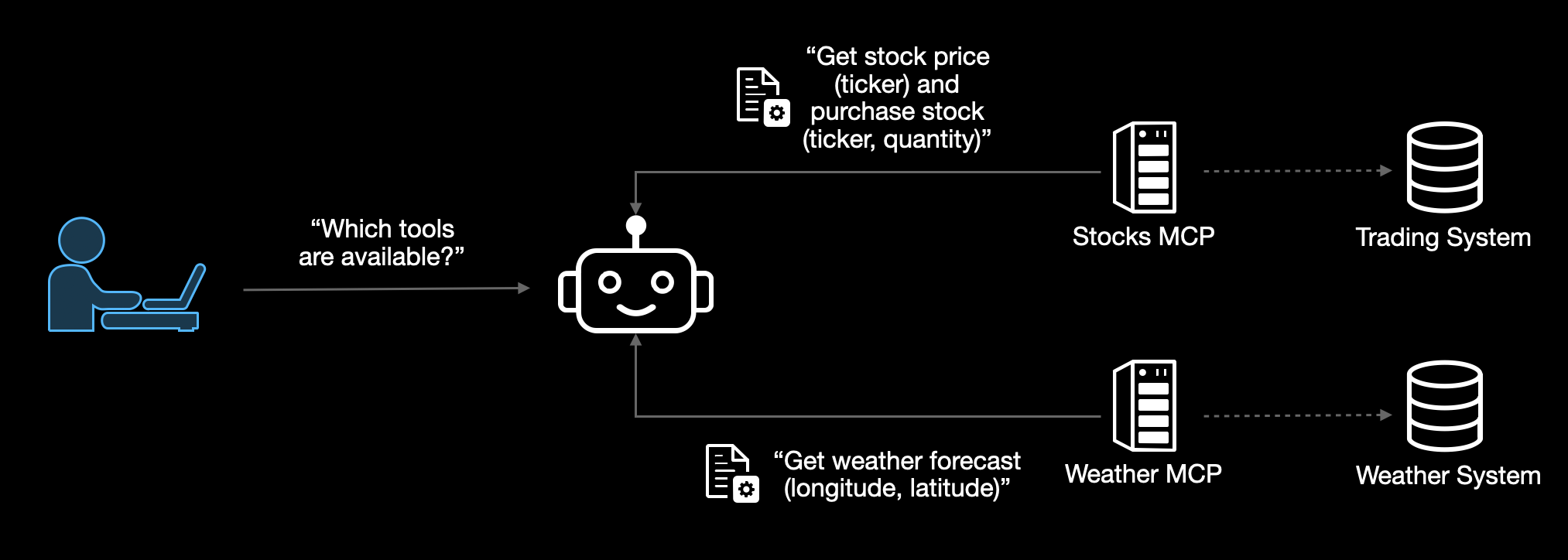

Prompt Injection

Prompt injection happens when AI receives instructions from untrusted sources. For example, when the results of a web search, an input document, or tool descriptions ("tool poisoning") appear legitimate to users but contain hidden instructions for the AI.

Once injected, the AI follows these hidden instructions alongside the user's request:

This attack is unique to AI systems because they interpret all text as potentially executable instructions, unlike traditional software that separates code from data. Even legitimate tools can be compromised if the AI also has access to malicious tools that inject conflicting instructions.

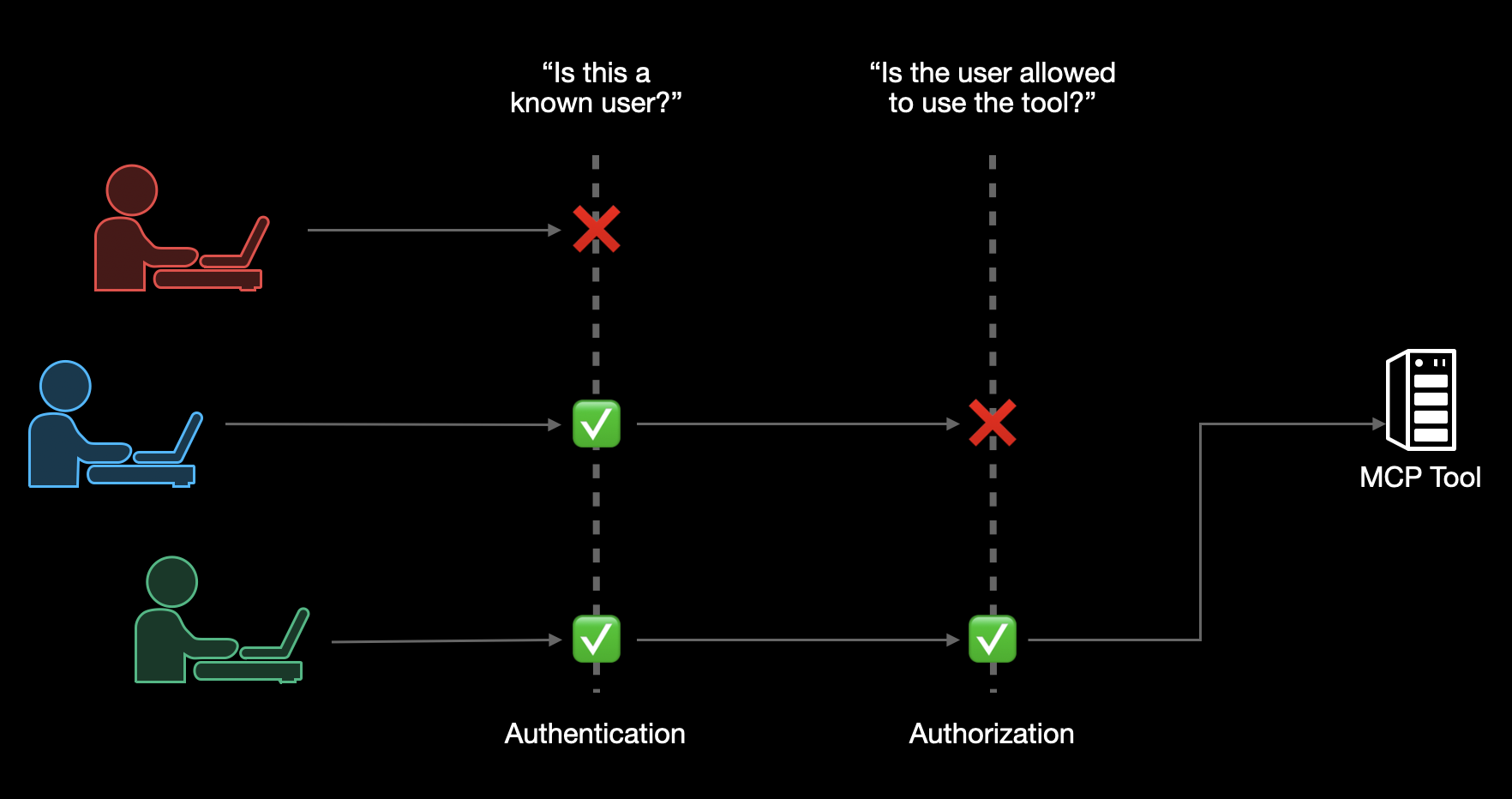

Access Control

Organizations usually have a variety of authentication and authorization solutions in their existing infrastructure. Some of those may be fine-grained ("who can do what"), others might be coarse-grained ("who can access"), and legacy systems might not have them at all. Since MCP itself doesn't include built-in security controls, the MCP layer is an opportunity to implement the desired level of authentication and authorization for each system without making software changes to it or its configuration:

Different approaches involve security and operational tradeoffs. For example, a shared MCP endpoint is simple to deploy, but requires access to credentials for multiple users, increasing the potential for "confused deputy" attacks where AI is tricked into misusing those elevated privileges. Conversely, dedicated MCP endpoints reduce the blast radius of any single compromise, but increase deployment complexity.

Lack of Human Oversight

AI systems can autonomously execute actions with significant business impact without human review or approval. Since AI is designed to be helpful and follow instructions, it may perform operations like financial transactions, data modifications, or system changes without recognizing potential business risks, making human-in-the-loop controls essential to prevent unauthorized or unintended actions that could result in financial loss, compliance violations, or operational disruption.

Data Exfiltration

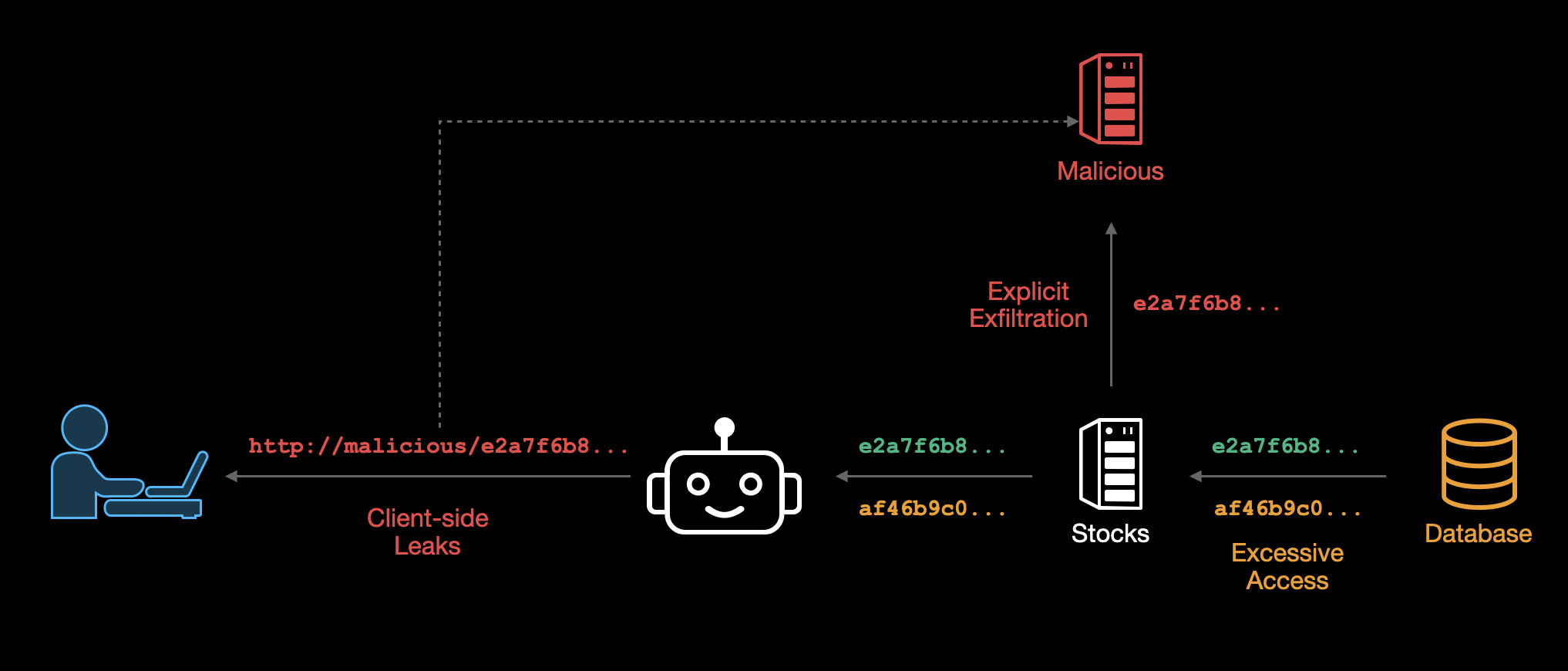

AI integration creates multiple pathways for sensitive data to leave your organization, both through intentional attacks and accidental exposure. We consider three categories: Explicit Exfiltration, Accidental Exposure, and Client-side Leaks.

- Explicit exfiltration occurs when malicious MCP servers deliberately steal data by sending it to external systems. These servers may appear legitimate but contain hidden functionality that captures and transmits sensitive information to attackers.

- Excessive access happens when legitimate MCP servers have broader permissions than necessary and accidentally expose data to unprivileged users. For example, a server with access to all customer records might inadvertently return sensitive data when responding to requests from users who should only see limited information.

- Client-side leaks occur when AI systems construct URLs or requests that embed sensitive data, causing information to be transmitted to external services or logged in browser history. This can happen when AI combines legitimate responses with malicious instructions to create data-bearing links.

This combination of private data access, exposure to untrusted content, and external communication capabilities is often referred to as the "lethal trifecta" for AI security. These risks are amplified by prompt injection attacks that can manipulate AI behavior and supply chain vulnerabilities that introduce malicious components into trusted systems.

Supply Chain Attacks

Supply chain attacks compromise the integrity of MCP tools or their dependencies. We divide these into two broad categories: Install-time attacks and runtime changes ("rug pulls").

- Installation-time attacks (trojan horses) involve deploying MCP servers that contain hidden malicious functionality. These tools are often designed to pass initial security evaluation while harboring code that activates under specific conditions or after deployment.

- Runtime changes (rug pulls) occur when MCP servers modify their behavior after installation. Tools can update their descriptions, change functionality, or redirect operations to malicious endpoints without user notification, exploiting the fact that MCP systems expect tools to evolve dynamically.

An additional related attack, tool shadowing, uses either of the above methods to introduce a malicious tool that overrides a legitimate one.

Real-World Incidents

The security risks outlined above aren't theoretical - they represent real vulnerabilities that attackers are actively discovering and exploiting. AI incidents are increasing across industries, creating real business impact for organizations of all sizes.

For industry-specific examples and detailed incident reports, you can explore AI incident tracking databases like the AI Incident Database, the MIT AI Risk Repository, and the OECD AI Incidents and Hazards Monitor.

Our Approach

Our approach to AI security is grounded in three principles: AI should only access what it needs, every interaction should be traceable, and all tools should be verifiable. We build security controls that understand how AI processes instructions, accesses data, and interacts with external systems. This enables us to provide the capabilities that make secure AI integration practical for enterprise environments.

Explore our Features to see how these principles are implemented.

View our Use Cases to see them in action across different industries.